For artificial intelligence (AI) to enter the mainstream of education, instructional designers must have design methodologies that enable them to efficiently create AI-driven learning products. The most commonly used instructional design methodology, ADDIE (analyze, design, develop, implement, and evaluate), does not address the needs of AI-driven learning systems, because it does not adequately define the role of data in the design process. In fact, none of the instructional design methodologies commonly used today takes into account the opportunities and challenges that AI and big data bring to the design process.

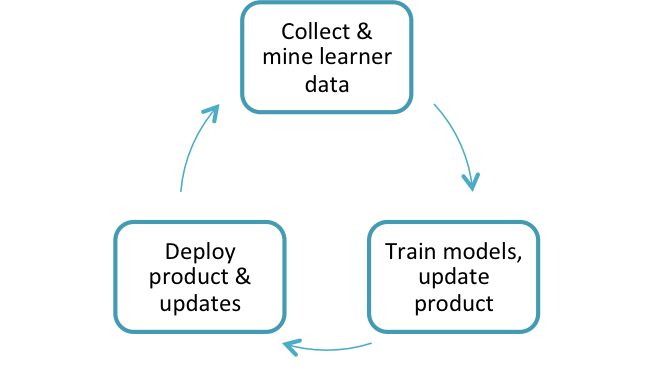

Data-Driven Development (D3) is a methodology that takes full advantage of data and machine learning. Data informs design very early in the process and continues throughout the product life cycle. Evaluation takes place constantly. Designs iterate rapidly, with the speed of iteration limited only by the time it takes to apply machine-learning methods and retrain the underlying AI models.

The first step is to collect data samples from target learners, ideally during the preparation phase of the project. Data can come from other data-driven learning systems or data repositories such as the CMU Data Shop. Some data-preparation steps may be needed to render the data into a form suitable for processing by machine-learning algorithms. The development team mines the data for insights about learner behavior and learner needs.
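As a rough illustration of the data-preparation step, the sketch below turns a raw interaction log into clean records that a machine-learning algorithm could consume. The column names (`learner_id`, `utterance`, `correct`), the sample rows, and the `prepare` helper are all hypothetical; real learner data will have its own format and will likely need more steps than this.

```python
import csv
import io

# Hypothetical raw log export; the columns and rows are invented for illustration.
RAW_LOG = """learner_id,utterance,correct
s01,  Hello there ,yes
s02,,no
s01,Where is the library?,NO
"""

def prepare(raw_csv):
    """Normalize raw log rows and drop rows with no learner input."""
    records = []
    for row in csv.DictReader(io.StringIO(raw_csv)):
        utterance = (row["utterance"] or "").strip().lower()
        if not utterance:
            continue  # nothing to learn from an empty response
        records.append({
            "learner_id": row["learner_id"].strip(),
            "utterance": utterance,
            # Map the free-text yes/no flag onto a boolean.
            "correct": row["correct"].strip().lower() == "yes",
        })
    return records

records = prepare(RAW_LOG)
for record in records:
    print(record)
```

Keeping preparation in one small function makes it easy to rerun on every new batch of learner data, so the team mines each batch in the same form.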

An initial prototype of the learning product should also be used as a data-collection tool. Test users should be representative of learners as well as teachers. Online services such as Mechanical Turk can be good sources of test users.

Once a data set has been collected and prepared, it is used to train and test the AI models embedded in the learning product. The development team then updates the learning product to incorporate the new models and other software enhancements. This can take place multiple times, both before formal launch of the product and after the system starts collecting data from the target learners.
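One pass of this train-and-test cycle might look like the following minimal sketch. The sample utterances, the intent labels, and the toy word-voting classifier are assumptions made for illustration only; they stand in for whatever AI models a real learning product embeds.

```python
import random
from collections import Counter, defaultdict

# Hypothetical labeled samples: (learner utterance, intent label).
SAMPLES = [
    ("hello teacher", "greeting"),
    ("hello everyone", "greeting"),
    ("good morning", "greeting"),
    ("where is the station", "question"),
    ("where is the office", "question"),
    ("what time is it", "question"),
    ("hello again", "greeting"),
    ("what is your name", "question"),
]

def split(data, test_fraction=0.25, seed=0):
    """Shuffle reproducibly and hold out a fraction of the data for testing."""
    shuffled = list(data)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

def train(examples):
    """Toy model: each known word votes for the labels it appeared with."""
    if not examples:
        raise ValueError("cannot train on an empty data set")
    word_votes = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        for word in text.lower().split():
            word_votes[word][label] += 1
    default = label_counts.most_common(1)[0][0]

    def predict(text):
        votes = Counter()
        for word in text.lower().split():
            votes.update(word_votes.get(word, {}))
        # Fall back to the most common training label for unseen words.
        return votes.most_common(1)[0][0] if votes else default

    return predict

def evaluate(model, examples):
    """Fraction of held-out examples the model labels correctly."""
    return sum(model(text) == label for text, label in examples) / len(examples)

train_set, test_set = split(SAMPLES)
model = train(train_set)
test_accuracy = evaluate(model, test_set)
print(f"held-out accuracy: {test_accuracy:.2f}")
```

Each time a new data set arrives, the same split, train, and evaluate steps can be repeated, which is what lets the iteration speed depend mainly on retraining time.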

Once the product is deployed, new versions of algorithms and models can run and be tested in the background on the same data collected from learners, to compare against the released versions. This further accelerates product iteration.
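A background comparison of this kind can be sketched as follows: the released model and a candidate model are scored on the same logged learner data, and the results are reported side by side. The logged examples, both toy models, and the function names are hypothetical placeholders, not a description of any particular product's internals.

```python
from typing import Callable, Dict, Sequence, Tuple

Example = Tuple[str, str]      # (logged learner input, expected label)
Model = Callable[[str], str]   # maps learner input to a predicted label

def accuracy(model: Model, data: Sequence[Example]) -> float:
    """Fraction of logged examples the model labels correctly."""
    if not data:
        raise ValueError("no logged data to evaluate on")
    return sum(model(text) == label for text, label in data) / len(data)

def compare_on_logged_data(released: Model, candidate: Model,
                           data: Sequence[Example]) -> Dict[str, float]:
    """Score both models on identical data so the results are comparable."""
    return {
        "released": accuracy(released, data),
        "candidate": accuracy(candidate, data),
    }

# Toy stand-ins for the released and candidate versions.
def released_model(text: str) -> str:
    return "greeting" if "hello" in text.lower() else "other"

def candidate_model(text: str) -> str:
    lowered = text.lower()
    if "hello" in lowered or "good morning" in lowered:
        return "greeting"
    return "other"

# Hypothetical data logged from learners using the released product.
LOGGED = [
    ("Hello, how are you?", "greeting"),
    ("Good morning", "greeting"),
    ("Where is the station?", "other"),
    ("hello there", "greeting"),
]

report = compare_on_logged_data(released_model, candidate_model, LOGGED)
print(report)
```

Because the candidate never interacts with learners in this setup, it can be evaluated without any risk to the released experience, and promoted only if it performs at least as well on the logged data.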

In the D3 model, evaluation is an ongoing process and not a separate stage as in ADDIE. A series of snapshot evaluations simultaneously test learning effectiveness, test new versions of the product, and collect data sets for use in planned product improvements. For example, Alelo recently conducted a snapshot evaluation of Enskill® English with students in an English for specific purposes (ESP) program at the University of Novi Sad in Serbia. We tested the effectiveness of the released version of Enskill English, tested the performance of a new version of the Enskill dialogue system that had yet to be released, and analyzed samples of learner speech to inform future extensions to Enskill. The evaluation provided evidence that (a) Enskill is helpful for learning spoken English skills, (b) learner performance improved through repeated practice, and (c) Enskill can be extended to meet the needs of ESP students who want to learn to speak English in a professional context.